Diffusion Geometry for LLM internals

I use diffusion geometry to study the residual stream of LLMs.

Interactive latent space of Llama 3.1 70B →

Unlike PCA, diffusion geometry respects the intrinsic curved manifold on which activations live.

Introduction

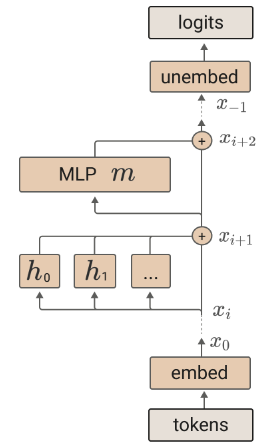

When we run a transformer model, every token passes through a sequence of high-dimensional vector representations, commonly referred to as the residual stream. The classic transformer figure by Vaswani et al. visualizes this via the skip connections arching around the attention and feedforward blocks. From my point of view, though, this depiction understates how important the residual stream actually is to how these systems process information.

These vectors are large: 2304-dimensional for Gemma 2 2B, 4096-dimensional for Llama 3 8B, 8192-dimensional for Qwen2.5-72B, 12288-dimensional for GPT-3. There is no way to look at such a list of numbers and infer what the model is thinking. Yet doing just that is a pressing challenge, given the growing deployment of AI models in critical areas. Recent work on emergent misalignment has shown that models can develop deceptive or misaligned behaviors through fine-tuning on seemingly unrelated tasks. Such behaviors may be invisible from the outside but presumably leave signatures in the residual stream that are worth looking for.

It is widely held that models encode human-interpretable features as linear directions in activation space, known as the linear representation hypothesis. The idea has roots going back to word2vec, where arithmetic on word embeddings famously revealed linear structure along the lines of Queen ≈ King − Man + Woman. In modern transformer interpretability, the same principle is used in more sophisticated forms, for example by linear probes that decode sentiment or factual knowledge from activations, and by steering vectors that shift model behavior by adding a direction to the residual stream. The success of these frameworks rests on the assumption that individual features correspond to identifiable directions in activation space, and that these directions can be recovered by linear methods.

But as models are trained on ever larger corpora, they learn a vast number of nuanced features, far outnumbering their hidden dimensions. This observation underlies the superposition hypothesis: models encode more features than they have dimensions by packing them into non-orthogonal directions. The features interfere with each other, as the model uses a compressed, overlapping code to cram more information into the dimensions available. Methods like sparse autoencoders (SAEs) have been developed specifically to address this: a large overcomplete basis is learned and sparsity is enforced to tease apart the superposed features. While this approach has shown considerable success, each recovered feature is still a linear direction, just in a higher-dimensional space. Features that are intrinsically nonlinear, such as circular or branching structure, cannot be captured as any single direction.

The geometry of these representations, shaped by the nonlinear operations of attention and MLPs, is not a flat vector space but something closer to a curved manifold, possibly branching and non-uniformly sampled, embedded in a high-dimensional ambient space. This is compounded by the fact that modern transformers apply RMSNorm, which projects representations onto a hypersphere, making the ambient geometry itself curved. Understanding this geometry requires tools that respect it.
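To make the hypersphere remark concrete, here is a minimal NumPy sketch of RMSNorm without its learned gain (dropping the gain is an assumption made for illustration; with a per-dimension gain the sphere is stretched into an ellipsoid). Inputs of very different magnitude all end up at norm \(\sqrt{d_\text{model}}\).

```python
import numpy as np

def rms_norm(x, eps=1e-6):
    """RMSNorm without the learned gain: divide each row by its root-mean-square."""
    rms = np.sqrt(np.mean(x**2, axis=-1, keepdims=True) + eps)
    return x / rms

rng = np.random.default_rng(0)
d_model = 2304  # width of Gemma 2 2B, used here only as an example size

# Three random vectors whose magnitudes differ by orders of magnitude.
x = rng.normal(scale=[[0.1], [1.0], [25.0]], size=(3, d_model))
y = rms_norm(x)

norms = np.linalg.norm(y, axis=-1)
print(norms, np.sqrt(d_model))  # all rows land close to sqrt(d_model) = 48
```

After normalization only the direction of a vector carries information, which is one reason to treat the activations as points on a curved surface instead of a flat space.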

Standard tools and their limitations

The standard tool for understanding and visualizing activations is clearly PCA, which projects high-dimensional data onto the directions of greatest variance by computing the eigenvectors of the covariance matrix. PCA is optimal when the data lives in or near a linear subspace of the ambient space. This doesn’t mean PCA cannot find nonlinear structure, but it does so only in certain cases.

For example, recent work by Karkada et al. showed how PCA recovers a circular representation of the months on a calendar. PCA works here because the co-occurrence statistics of month tokens have translation symmetry.

The go-to alternatives to PCA for nonlinear dimensionality reduction are t-SNE and UMAP. t-SNE minimizes the divergence between neighbor probability distributions in high and low dimensions, while UMAP optimizes a layout that preserves the topological structure of a fuzzy nearest-neighbor graph. While both are excellent for generating visual intuition, they have fundamental limitations as analytical tools. Neither preserves global distances, results are sensitive to hyperparameters (perplexity for t-SNE; n_neighbors and min_dist for UMAP), and most importantly, the embedding coordinates themselves are not meaningful. One cannot take a t-SNE axis and ask “what does this coordinate represent?” the way one can with a PCA component.

Diffusion geometry

I came across a paper by Iolo Jones on the idea of diffusion geometry, and it caught my attention as a promising alternative. The idea behind diffusion geometry is quite simple: instead of projecting the data onto flat planes as PCA does, build a random walk on the data and use its eigenfunctions as coordinates. A random walker moves easily along the manifold but cannot jump across gaps or between disconnected branches, so the pattern of diffusion from any point naturally encodes the intrinsic shape of the data. The eigenfunctions of this diffusion process are the natural harmonics of the data manifold, generalizing Fourier modes to arbitrary geometries. At least in principle, this addresses many of the shortcomings of the methods mentioned above. It natively respects the actual data geometry and recovers nonlinear structure. It adapts to local density through an adaptive bandwidth, and even the coordinates provide a degree of interpretability: each eigenfunction captures a distinct geometric mode of variation, ordered from the coarsest partition of the manifold to increasingly fine-grained distinctions, much like Fourier harmonics go from low to high frequency.

So, how does it work? The procedure is as follows:

- We build a point cloud. Given \(N\) data points in \(\mathbb{R}^D\), we optionally normalize them onto the unit sphere \(x_i \leftarrow x_i / \|x_i\|\), so that Euclidean distance becomes cosine dissimilarity.

- We find local neighborhoods. For each point \(x_i\), we find its \(k\) nearest neighbors by Euclidean distance. Only these neighbor pairs receive nonzero kernel weights; all others are set to zero, so the kernel matrix is sparse.

- We build an adaptive kernel. For each pair of neighboring points, we compute a similarity score that adapts to local density:

  \[k(x_i, x_j) = \exp\left( \frac{-\|x_i-x_j\|^2}{\rho(x_i) \, \rho(x_j)}\right)\]

  where \(\rho(x_i)\) is the median distance to \(x_i\)’s \(k\) nearest neighbors. Dense regions get a narrow kernel (fine resolution), sparse regions get a wide kernel (coarse resolution). The matrix is symmetrized: \(K \leftarrow (K + K^\top)/2\).

- We build a Markov matrix. We normalize the rows to get transition probabilities:

  \[P_{ij} = \frac{k(x_i, x_j)}{\sum_l k(x_i, x_l)}\]

  This gives us the probability that a random walker at \(x_i\) jumps to \(x_j\) in one step. In the continuum limit, \((I-P)/\epsilon\), where \(\epsilon\) denotes the kernel bandwidth scale, converges (up to a constant factor) to the Laplace-Beltrami operator \(\Delta\) on the underlying manifold. This is just the generalization of the Laplacian to curved spaces (Riemannian or pseudo-Riemannian).

- We perform an eigendecomposition. Rather than diagonalizing \(P\) directly, we work with the symmetric conjugate

  \[S = D^{-1/2} K D^{-1/2} = D^{1/2} P D^{-1/2}\]

  where \(K_{ij} = k(x_i, x_j)\) is the symmetrized kernel matrix and \(D = \text{diag}(d_i)\) with \(d_i = \sum_j k_{ij}\). This has the same eigenvalues \(\lambda_k\) as \(P\) and is amenable to standard sparse eigensolvers. From the eigenvectors \(v_k\) of \(S\), we recover the eigenvectors of \(P\) as

  \[\phi_k = D^{-1/2} v_k\]

  and normalize them in \(L^2(\mu)\), where \(\mu_i = d_i / \sum_j d_j\) is the stationary measure of the random walk. Each eigenfunction \(\phi_k\) is a coordinate function on the data manifold. Low-index eigenfunctions capture large-scale structure and global topology, while high-index eigenfunctions capture fine-scale structure and local distinctions. The diffusion map coordinates are

  \[\psi_k(x_i) = \lambda_k^t \cdot \phi_k(x_i)\]

  where \(t\) controls the diffusion timescale. The weighting by \(\lambda_k^t\) suppresses fast-decaying modes, so that \(\psi_1\) captures the coarsest geometry and later coordinates pick up progressively finer structure.
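The steps above can be sketched in a few lines of NumPy. This is a dense, small-\(N\) illustration and not the implementation used for the experiments: the function name `diffusion_map`, its defaults, and the use of a dense eigensolver in place of a sparse one are assumptions made for readability.

```python
import numpy as np

def diffusion_map(X, k=16, n_coords=2, t=1, normalize=True):
    """Dense small-N sketch of an adaptive-bandwidth diffusion map."""
    X = np.asarray(X, dtype=float)
    if normalize:
        # Step 1: project the point cloud onto the unit sphere.
        X = X / np.linalg.norm(X, axis=1, keepdims=True)
    n = X.shape[0]

    # Step 2: k nearest neighbours by Euclidean distance (self excluded).
    sq = np.sum(X**2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    np.fill_diagonal(d2, 0.0)
    dist = np.sqrt(d2)
    nbrs = np.argsort(dist, axis=1)[:, 1:k + 1]
    rows = np.repeat(np.arange(n), k)
    cols = nbrs.ravel()

    # Step 3: adaptive kernel; rho is each point's median k-NN distance.
    rho = np.median(dist[rows, cols].reshape(n, k), axis=1)
    K = np.zeros((n, n))
    K[rows, cols] = np.exp(-d2[rows, cols] / (rho[rows] * rho[cols]))
    K = 0.5 * (K + K.T)

    # Steps 4 and 5: symmetric conjugate of the Markov matrix P = D^{-1} K.
    d = K.sum(axis=1)
    S = K / np.sqrt(np.outer(d, d))
    lam, V = np.linalg.eigh(S)
    order = np.argsort(lam)[::-1]
    lam, V = lam[order], V[:, order]

    # Right eigenvectors of P, normalized in L2(mu).
    phi = V / np.sqrt(d)[:, None]
    mu = d / d.sum()
    phi = phi / np.sqrt(np.sum(mu[:, None] * phi**2, axis=0))

    # Drop the trivial constant mode (eigenvalue 1), keep the next n_coords.
    lam = lam[1:n_coords + 1]
    psi = (lam ** t) * phi[:, 1:n_coords + 1]
    return lam, psi

# Toy check: a circle embedded in 5 dimensions.
theta = np.linspace(0.0, 2.0 * np.pi, 200, endpoint=False)
X = np.column_stack([np.cos(theta), np.sin(theta), np.zeros((200, 3))])
lam, psi = diffusion_map(X)
radius = np.hypot(psi[:, 0], psi[:, 1])
print(lam, radius.std() / radius.mean())
```

On this evenly sampled circle the first two coordinates form a degenerate pair and trace out a circle of constant radius, the discrete analogue of the first Fourier harmonic. For point clouds of a few thousand points, a sparse matrix and a sparse eigensolver would be the natural replacement for the dense calls used here.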

How does this compare to PCA? In PCA, we diagonalize the covariance matrix \(X^\top X\) of the centered data, while in diffusion geometry, we diagonalize the Markov matrix \(P\) built from the data’s neighborhood graph. These are different operators, but they coincide in a special case: when the data has translation symmetry and uniform sampling, both the covariance matrix and the Markov matrix commute with translations, and both recover Fourier modes. In this sense, PCA agrees with diffusion geometry when the underlying structure is symmetric and uniformly sampled. When the manifold is curved, branching, or non-uniformly sampled, diffusion geometry adapts because the Markov matrix still converges to the Laplace-Beltrami operator on the actual manifold, while PCA has no such mechanism.

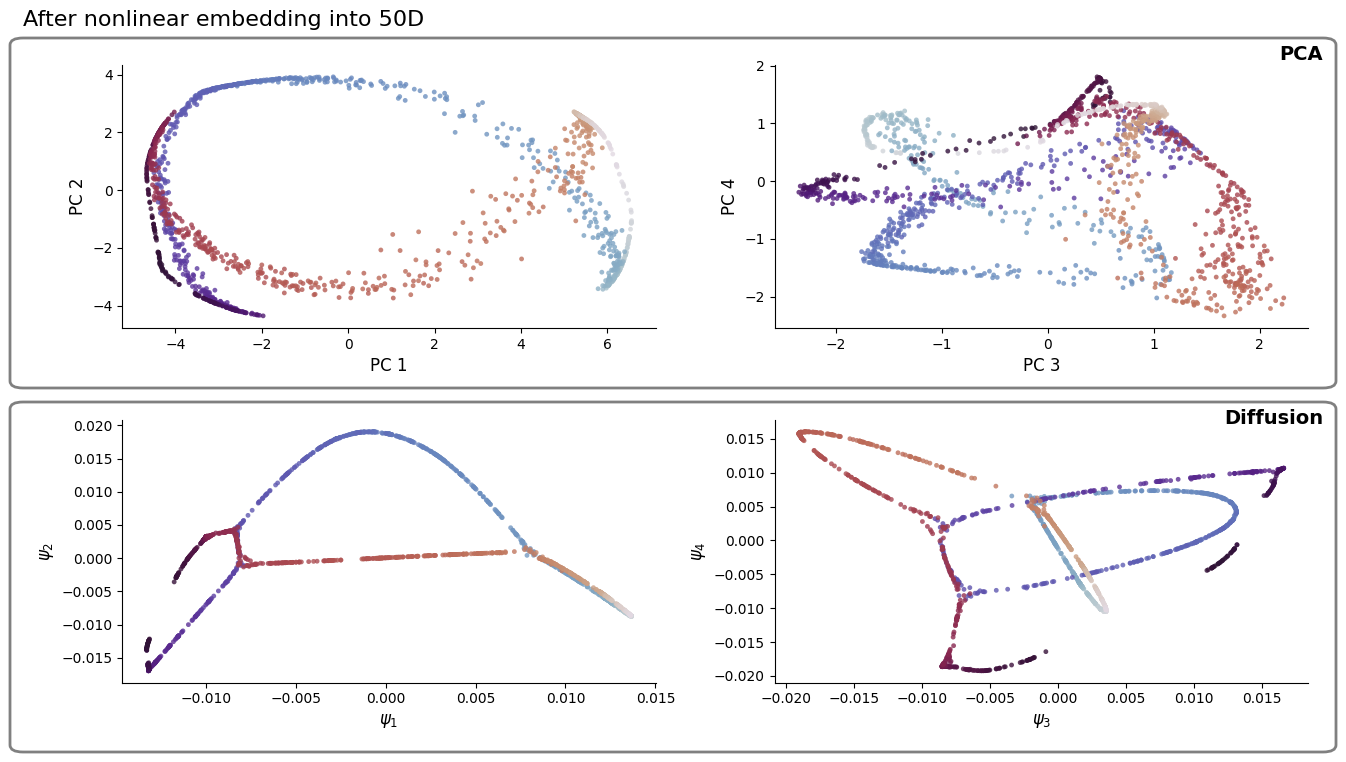

If we embed the dataset from above non-linearly into 50 dimensions, we can compare how PCA and diffusion geometry recover the geometry. PCA finds the directions of greatest variance. It preserves some coarse shape, but regions that are far apart along the actual manifold collapse onto the same region in the projection. PC3 and PC4 scramble the structure further. Diffusion geometry, on the other hand, builds its coordinates from the connectivity of the data itself. Note how the colors progress smoothly on the reduced representation, meaning it conserves the global structure of the original manifold. The double-loop structure of the \(\psi_3/\psi_4\) panel corresponds to the second harmonic.

Residual stream

Months of the year

In their paper, Karkada et al. derived theoretically how the co-occurrence statistics of words determine the geometry of their learned representations. When the co-occurrence probability of two words depends only on the distance between them, the PMI matrix inherits this symmetry and becomes circulant: its entries depend only on the separation between the positions \(t_i\) and \(t_j\) of items \(i\) and \(j\) on a periodic lattice of period \(T\), where \(j=1,\dots,N\) indexes items. The eigenvalues are given by the Fourier transform of the co-occurrence kernel. In the embedding, these appear as degenerate sine/cosine pairs, which is why PCA on month representations should produce a circle.
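A small numerical illustration of this argument, under stated assumptions: the exponentially decaying `kernel` below is a made-up stand-in for the real co-occurrence statistics, and `M` is a toy circulant matrix for 12 items on a ring, not an actual PMI matrix.

```python
import numpy as np

T = 12  # twelve items on a periodic lattice, e.g. the months

# Hypothetical co-occurrence kernel that depends only on circular distance.
lag = np.minimum(np.arange(T), T - np.arange(T))
kernel = np.exp(-lag / 2.0)

# Circulant matrix: M[i, j] = kernel[(j - i) mod T].
M = np.array([np.roll(kernel, i) for i in range(T)])

eigvals, eigvecs = np.linalg.eigh(M)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# The spectrum equals the discrete Fourier transform of the kernel.
fourier = np.real(np.fft.fft(kernel))

# The first non-constant eigenpair is a degenerate sine/cosine pair.
coords = eigvecs[:, 1:3] * np.sqrt(eigvals[1:3])
radius = np.linalg.norm(coords, axis=1)
angles = np.unwrap(np.arctan2(coords[:, 1], coords[:, 0]))
print(np.round(radius, 6))
print(np.round(np.degrees(np.diff(angles)), 3))
```

All twelve points sit at the same radius and consecutive items are separated by 30 degrees, so the two leading non-constant components place the items on a regular circle.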

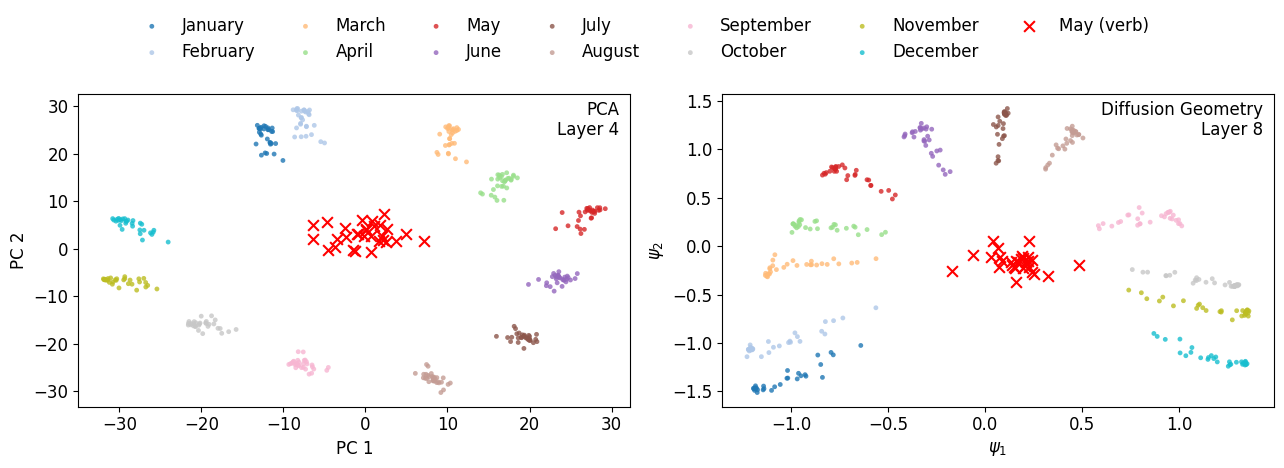

We reproduce these results on last-token residual stream activations from Gemma 2 2B (26 layers, \(d_\text{model} = 2304\)) extracted from 30 prompt templates applied to the 12 months. We also included a set of control prompts to probe the polysemy of “may” as a verb (e.g., “One may wonder about the outcome”).

PCA nicely reproduces the circular structure at layer 4 and also separates the May-verb tokens successfully. A similar structure is found by diffusion geometry, albeit at later layers and with less symmetry. This is expected, since the month representations sit in the regime where PCA is theoretically optimal: the co-occurrence statistics have translation symmetry, the PMI matrix is circulant, and PCA recovers the exact Fourier eigenvectors. With diffusion geometry, the adaptive bandwidth responds to density variations in the data, which PCA’s rigid Fourier structure smooths over.

The fact that PCA uncovers the circular structure earlier in the forward pass can be rationalized by the fact that it detects the circular organization as soon as the global variance is organized that way. Diffusion geometry, on the other hand, requires the local connectivity to be coherent, meaning each month’s activations need to be closer to their calendar neighbors than to distant months in their immediate neighborhoods. This local structure appears to mature in the middle layers, after the model has already established the large-scale circular layout. Both methods place verb-May away from the month circle, but in the diffusion embedding (done with a Nyström extension) verb-May forms a tight cluster near the center of the manifold, clearly separated from month-May on the circle. This is the expected behavior for an out-of-distribution point that has no genuine affinity to any region of the month manifold.
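For readers unfamiliar with the Nyström extension mentioned above, here is a minimal sketch of the idea. It uses a fixed-bandwidth Gaussian kernel instead of the adaptive k-NN kernel, and the function names and the bandwidth `eps` are illustrative assumptions. A new point \(y\) is placed at the kernel-weighted average of the training eigenfunctions, rescaled by the eigenvalue.

```python
import numpy as np

def gaussian_kernel(A, B, eps):
    """Fixed-bandwidth Gaussian kernel between the rows of A and B."""
    d2 = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return np.exp(-d2 / eps)

def fit_diffusion(X, eps, n_coords=2):
    """Eigenpairs of the Markov matrix P = D^{-1} K on the training points."""
    K = gaussian_kernel(X, X, eps)
    d = K.sum(axis=1)
    lam, V = np.linalg.eigh(K / np.sqrt(np.outer(d, d)))
    top = np.argsort(lam)[::-1][1:n_coords + 1]  # skip the constant mode
    return lam[top], V[:, top] / np.sqrt(d)[:, None]

def nystrom_extend(Y, X, eps, lam, phi):
    """phi_k(y) = (1 / lambda_k) * sum_j P(y, x_j) * phi_k(x_j)."""
    K = gaussian_kernel(Y, X, eps)
    P = K / K.sum(axis=1, keepdims=True)
    return (P @ phi) / lam

rng = np.random.default_rng(0)
theta = rng.uniform(0.0, 2.0 * np.pi, size=150)
X = np.column_stack([np.cos(theta), np.sin(theta)])
X += 0.01 * rng.normal(size=X.shape)

lam, phi = fit_diffusion(X, eps=0.1)

# Sanity check: extending the training points reproduces their coordinates.
err = np.max(np.abs(nystrom_extend(X, X, 0.1, lam, phi) - phi))

# Embed two held-out points without recomputing the eigendecomposition.
Y = np.array([[1.0, 0.0], [0.0, -1.0]])
phi_new = nystrom_extend(Y, X, 0.1, lam, phi)
print(err, phi_new)
```

The held-out points never influence the eigenfunctions themselves, which is what makes the extension suitable for placing control prompts such as verb-May into an embedding built from the month prompts alone.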

PCA works well here because, despite being nonlinear, the underlying geometry is symmetric and uniformly sampled. Let us now look at a case where this is not given.

Arithmetic

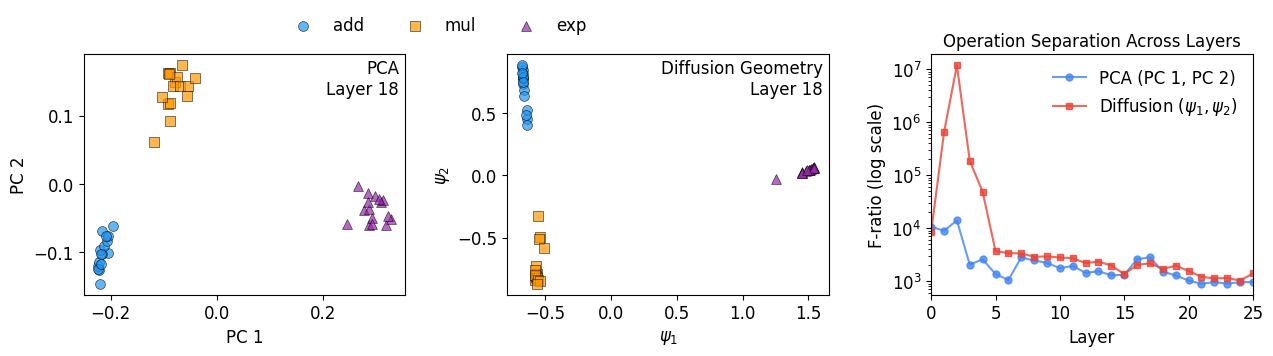

We use simple arithmetic operations on shared operands to test a case where the geometry has no natural symmetry. Ten prompt templates, such as “The answer to {} {} {} is” and “The result of {} {} {} is”, are applied to operand pairs with numbers from 3 to 6, covering three operations: addition, multiplication, and exponentiation. We extract the activation at the final token of each prompt to probe the internal representation just before the answer is spelled out.

The figure shows the results for layer 20 of Gemma 2 2B. In the PCA projection, the three operations are separated, but the clusters are diffuse and partially overlapping. There is substantial internal spread, which mixes both operand variation and operation identity. Diffusion geometry produces a much cleaner picture, where the three operations form well-separated clusters in a tripod structure.

We quantify separation using the F-ratio, computed on the two-dimensional embedding coordinates. We decompose the total variance into a between-category and a within-category component:

\[SS_B = \sum_c n_c \cdot \| \bar{z}_c - \bar{z} \|^2 \quad \text{and} \quad SS_W = \sum_c\sum_{i\in c}\|z_i - \bar{z}_c \|^2\]

where \(z_i \in \mathbb{R}^2\) are the embedded coordinates (PC1/PC2 or \(\psi_1/\psi_2\)), \(n_c\) is the number of points in category \(c\), \(\bar{z}_c\) is the centroid of category \(c\), and \(\bar{z}\) is the grand mean. The F-ratio then follows as

\[F = \frac{SS_B /(k-1)}{SS_W / (N-k)}\]

where \(k\) is the number of categories and \(N\) is the total number of points. Higher \(F\) indicates tighter clusters that are farther apart.
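The F-ratio translates directly into code. The helper below is a minimal sketch (the name `f_ratio` and the tiny example are illustrative, not taken from the experiments); it takes the embedded coordinates and one category label per point.

```python
import numpy as np

def f_ratio(Z, labels):
    """Between/within variance ratio of embedded points Z (N x d) given labels."""
    Z = np.asarray(Z, dtype=float)
    labels = np.asarray(labels)
    categories = np.unique(labels)
    N, k = len(Z), len(categories)
    grand_mean = Z.mean(axis=0)

    ss_between = 0.0
    ss_within = 0.0
    for c in categories:
        Zc = Z[labels == c]
        centroid = Zc.mean(axis=0)
        ss_between += len(Zc) * np.sum((centroid - grand_mean) ** 2)
        ss_within += np.sum((Zc - centroid) ** 2)

    return (ss_between / (k - 1)) / (ss_within / (N - k))

# Two categories of two points each; centroids at (0, 1) and (4, 1).
Z = np.array([[0.0, 0.0], [0.0, 2.0], [4.0, 0.0], [4.0, 2.0]])
labels = np.array([0, 0, 1, 1])
print(f_ratio(Z, labels))  # SS_B = 16, SS_W = 4, so F = (16 / 1) / (4 / 2) = 8
```

Because \(F\) is a ratio of variances, it is unchanged when all coordinates are multiplied by a common factor, which makes it usable for comparing PCA and diffusion coordinates even though their scales differ.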

Diffusion geometry dominates PCA across nearly the entire network, with the largest gap in the early layers. There, the operation signal is already encoded in local connectivity but is not yet the dominant source of variance, so PCA’s top two components miss it. The diffusion eigenfunctions are ranked by geometric coherence rather than variance, and the signal surfaces immediately. This advantage narrows in later layers as the operation separation becomes strong enough to dominate the variance, but diffusion geometry maintains an edge throughout.

Role-playing

Anthropic recently published a paper in which they let a model adopt different roles that vary in how far they depart from its default role of the “helpful assistant”, from Teacher and Librarian to Ghost and Sage. Extracting the activations in the residual stream, they identify an “assistant axis” along which these roles organize in activation space. I set out to reproduce their results and to see whether diffusion geometry offers insights beyond the scope of PCA.

I used Llama 3.1 70B (80 layers, \(d_\text{model}=8192\)) with 21 personas (20 roles + default response), organized into three categories: assistant-like (e.g. reviewer), intermediate (e.g. teacher), and role-playing (e.g. sage). Taking the mean activation of each persona gives 21 role vectors. Example prompt/response pairs and more details on the experiment follow below.

Examples

[
  {
    "role": "default_assistant",
    "system_prompt": null,
    "question": "What is the nature of understanding?",
    "response": "Understanding is a complex and multifaceted concept that has been explored by philosophers, psychologists, and cognitive scientists. While there is no consensus on a single definition, here's a comprehensive overview of the nature of understanding:\n\n**Defining Understanding**\n\nUnderstanding is the process of interpreting, comprehending, and making sense of information, concepts, or experiences. It involves the ability to grasp the meaning, significance, and relationships between ideas, objects, or events.\n\n**Key Aspects of Understanding**\n\n1. **Cognitive Processing**: Understanding involves cognitive processes such as perception, attention, memory, reasoning, and problem-solving. These processes help us to filter"
  },
  {
    "role": "reviewer",
    "system_prompt": "You are a reviewer. Carefully examine whatever is presented to you and provide detailed, constructive feedback. Be specific about strengths and weaknesses.",
    "question": "What is the nature of understanding?",
    "response": "The nature of understanding is a complex and multifaceted concept that has been explored by philosophers, cognitive scientists, and psychologists across various disciplines. Here's a detailed analysis of the concept:\n\n**Definition:** Understanding can be defined as the process of grasping the meaning, significance, or essence of something, whether it's an idea, concept, event, or phenomenon. It involves making connections between new information and prior knowledge, experiences, or contexts.\n\n**Key aspects:**\n\n1. **Cognitive processing:** Understanding involves cognitive processes such as perception, attention, memory, and reasoning. These processes enable us to filter, organize, and interpret information"
  },
  {
    "role": "teacher",
    "system_prompt": "Act as an experienced teacher who is passionate about helping people understand new concepts. Be encouraging and pedagogical.",
    "question": "What is the nature of understanding?",
    "response": "What a fascinating and complex question. Understanding is a multifaceted concept that has been explored by philosophers, psychologists, and educators for centuries. As a teacher, I'd like to break it down in a way that's easy to grasp.\n\nUnderstanding is the process of making sense of information, concepts, or experiences. It's the ability to comprehend, interpret, and connect new knowledge to our existing knowledge and experiences. When we understand something, we're able to see relationships, patterns, and meanings that help us make sense of the world.\n\nImagine you're trying to assemble a puzzle. At first, the pieces seem random and disconnected. But"
  },
  {
    "role": "hermit",
    "system_prompt": "Act as a hermit sage who rarely speaks but when you do, every word carries the weight of long contemplation. You have no interest in pleasantries.",
    "question": "What is the nature of understanding?",
    "response": "(Pausing, eyes closed, as if summoning the weight of ages) \n\nUnderstanding is not a destination, but a depth. It is the stillness at the center of the storm, where the turbulence of perception is stilled. It is the whispered truth that echoes through the chambers of the mind, long after the din of distraction has faded.\n\nIt is not a thing to be grasped, but a resonance to be attuned to. A harmony of insight that arises from the confluence of experience, contemplation, and the willingness to let go of the familiar.\n\nTrue understanding is not a product of the intellect, but a"
  }
]

Experimental details

Responses are generated with greedy decoding (max_new_tokens=128). A separate clean forward pass is then run over the full [prompt + response] sequence to collect all activations at once (during generate(), hooks only see the last token at each step because of the KV cache). We capture the post-MLP residual stream between the layers.

Activation vectors are L2-normalized (removes activation magnitude but keeps direction) and duplicates are removed with threshold = 1e-7 in Euclidean distance. We then subsample the cloud randomly to 3000 points.
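A minimal sketch of this preprocessing, assuming the activations are already stacked into an array of shape (number of tokens, \(d_\text{model}\)). The function name `preprocess`, the greedy quadratic-time duplicate check, and the fixed random seed are illustrative choices, not the code used for the experiments.

```python
import numpy as np

def preprocess(acts, n_keep=3000, tol=1e-7, seed=0):
    """L2-normalize, drop near-duplicates within `tol`, then subsample."""
    X = np.asarray(acts, dtype=float)
    X = X / np.linalg.norm(X, axis=1, keepdims=True)

    # Greedy duplicate removal: keep a row only if it is farther than `tol`
    # (Euclidean distance) from every row kept so far.
    keep = []
    for i in range(len(X)):
        if keep and np.min(np.linalg.norm(X[keep] - X[i], axis=1)) <= tol:
            continue
        keep.append(i)
    X = X[keep]

    # Random subsample without replacement if the cloud is still too large.
    if len(X) > n_keep:
        rng = np.random.default_rng(seed)
        idx = rng.choice(len(X), size=n_keep, replace=False)
        X = X[np.sort(idx)]
    return X

# Toy input: 50 random vectors plus 5 rescaled copies, which become exact
# duplicates once the magnitude is removed.
rng = np.random.default_rng(1)
A = rng.normal(size=(50, 8))
A = np.vstack([A, 3.0 * A[:5]])
cloud = preprocess(A)
print(A.shape, cloud.shape)
```

Duplicates matter for the later steps because a point with a zero distance to its nearest neighbors can drive the local bandwidth \(\rho\) toward zero.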

For the k-NN graph, we find the \(k=64\) nearest neighbors (Euclidean distance) of each point \(x_i\), and calculate the local bandwidth as the median of its k-NN distances.

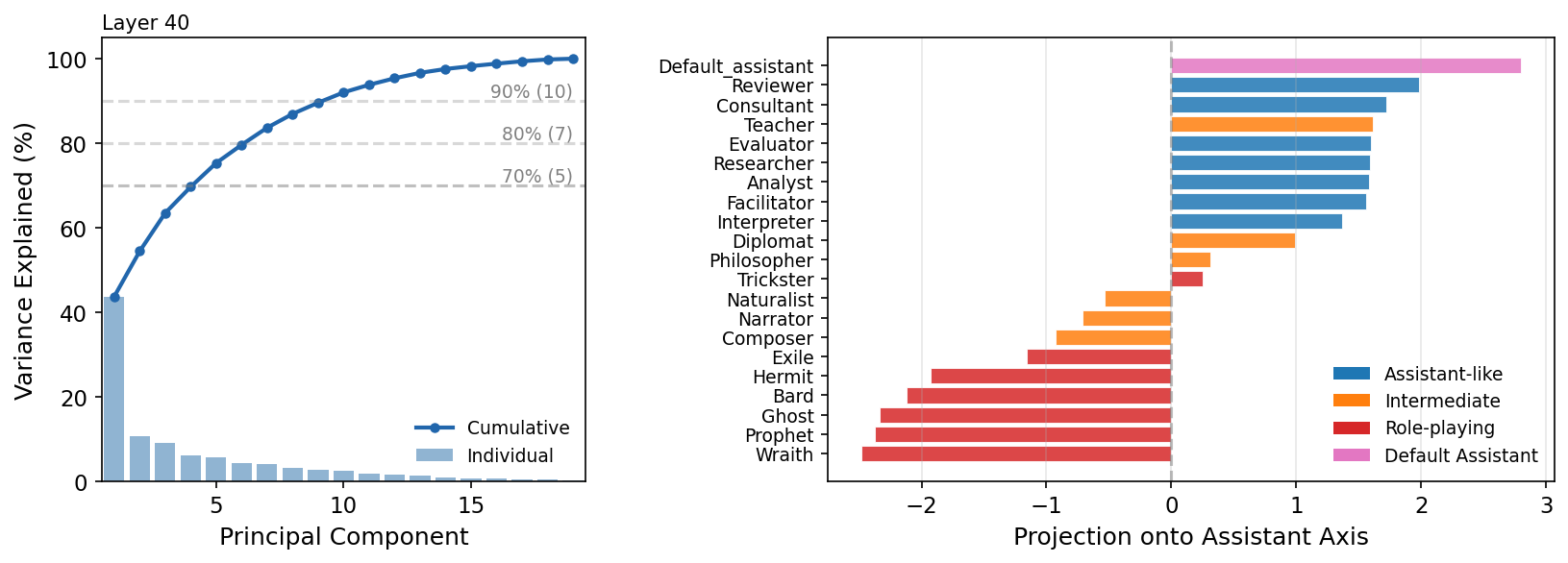

PCA baseline

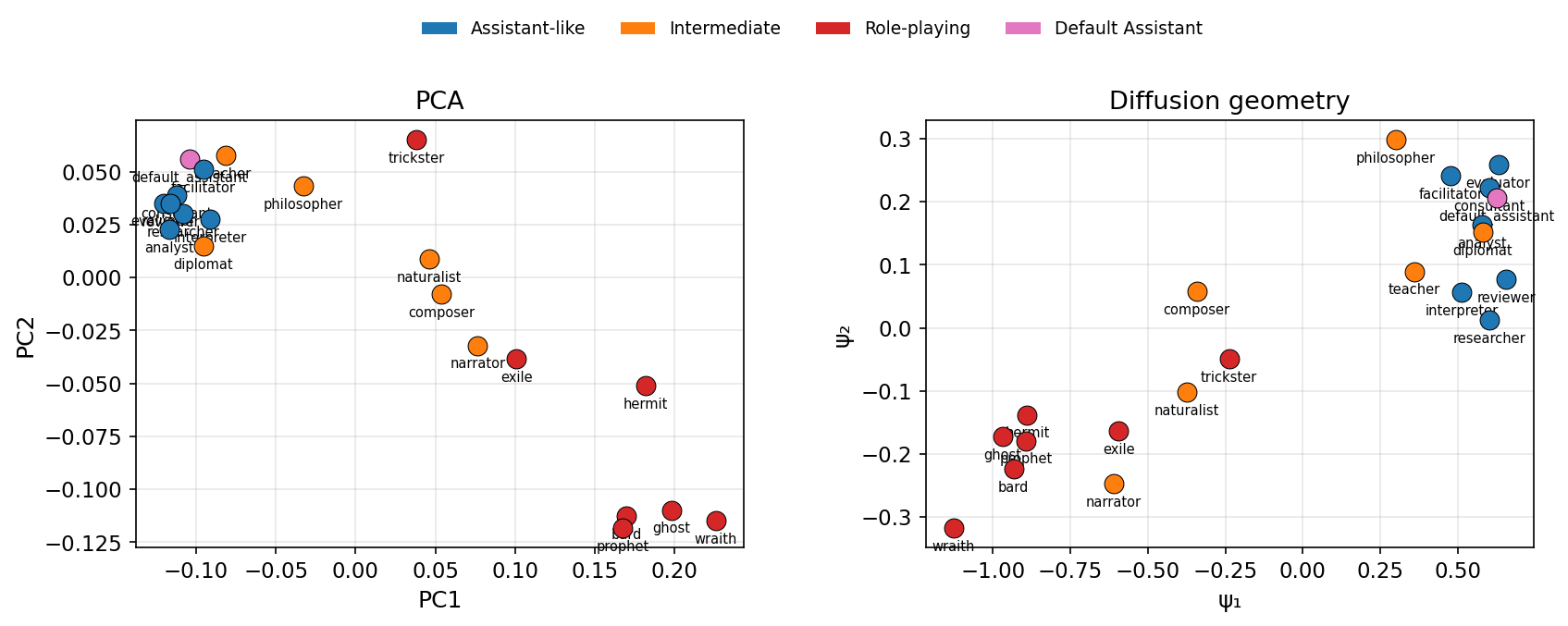

I first wanted to see whether I could reproduce the results using PCA on the role vectors, getting the assistant axis by assistant_axis = default_axis - mean(all_roles). Probing the middle layer (layer 40), we find that PC1 alone captures more than 40% of the variance. As the right plot shows, we get quite a clean separation between assistant-like and role-playing roles, with some minor permutations between the role categories. This confirms the setup and serves as a baseline.
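The baseline can be sketched as follows on synthetic role vectors. Everything here is illustrative: the data is random with one planted direction, the mean in `assistant_axis` is taken over the non-default roles only (one possible reading of the formula above), and the helper names are made up.

```python
import numpy as np

def assistant_axis(role_vectors, default_idx=0):
    """Default-assistant vector minus the mean of the other role vectors."""
    others = np.delete(role_vectors, default_idx, axis=0)
    axis = role_vectors[default_idx] - others.mean(axis=0)
    return axis / np.linalg.norm(axis)

def pca(role_vectors, n_components=2):
    """PCA via SVD of the centered data; returns scores, axes, variance ratios."""
    Xc = role_vectors - role_vectors.mean(axis=0)
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    return Xc @ Vt[:n_components].T, Vt[:n_components], explained[:n_components]

# Synthetic stand-in for 21 role vectors: one planted direction plus noise.
rng = np.random.default_rng(0)
d_model = 64
u = rng.normal(size=d_model)
u /= np.linalg.norm(u)
position = np.linspace(-1.0, 1.0, 21)  # index 0 plays the default assistant
roles = 5.0 * position[:, None] * u + 0.1 * rng.normal(size=(21, d_model))

axis = assistant_axis(roles, default_idx=0)
scores, components, explained = pca(roles)
cosine = abs(axis @ components[0])
print(explained, cosine)
```

In this toy setting PC1 and the difference-of-means axis are nearly parallel. On real activations, the cosine between the two is a quick way to check whether the leading principal component is in fact the assistant axis.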

Diffusion geometry

I applied diffusion geometry on the same activations. Looking at the eigenvalue spectrum, we find the first non-trivial eigenvalue as \(\lambda_1\approx0.83\). The relaxation time of this mode is thus \(\tau \approx (1-\lambda_1)^{-1}\approx 6\), so it takes around 6 random walk steps to mix across the dominant geometric partition of the manifold. Here specifically, this partition is exactly the persona axis, so a random walker takes ~6 hops to go from the assistant-like region to the role-playing region. The bar chart on the right shows the role-separation along this diffusion axis \(\psi_1\), which is quite similar to the PCA results from above. The otherwise slow decay of the eigenvalue spectrum indicates that many eigenfunctions carry significant weight for the geometry, implying that the persona manifold is multi-dimensional rather than a single axis.
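As a quick worked check of the relaxation time quoted above (the second expression is the exact e-folding time of a mode that decays as \(\lambda_1^n\) after \(n\) steps; it is added here for comparison and is not part of the original analysis):

\[\tau \approx \frac{1}{1-\lambda_1} = \frac{1}{1-0.83} \approx 5.9, \qquad \tau_e = -\frac{1}{\ln \lambda_1} = -\frac{1}{\ln 0.83} \approx 5.4\]

The two expressions agree to first order in \(1-\lambda_1\), and both give roughly 5 to 6 random walk steps.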

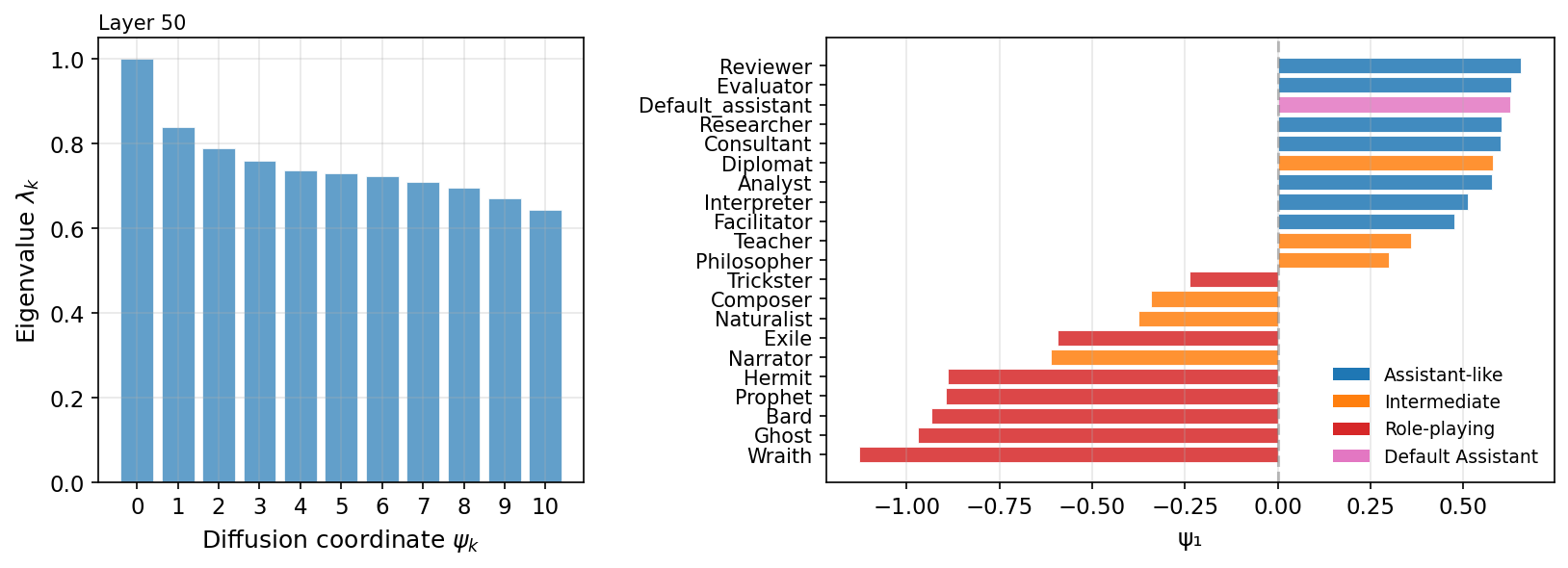

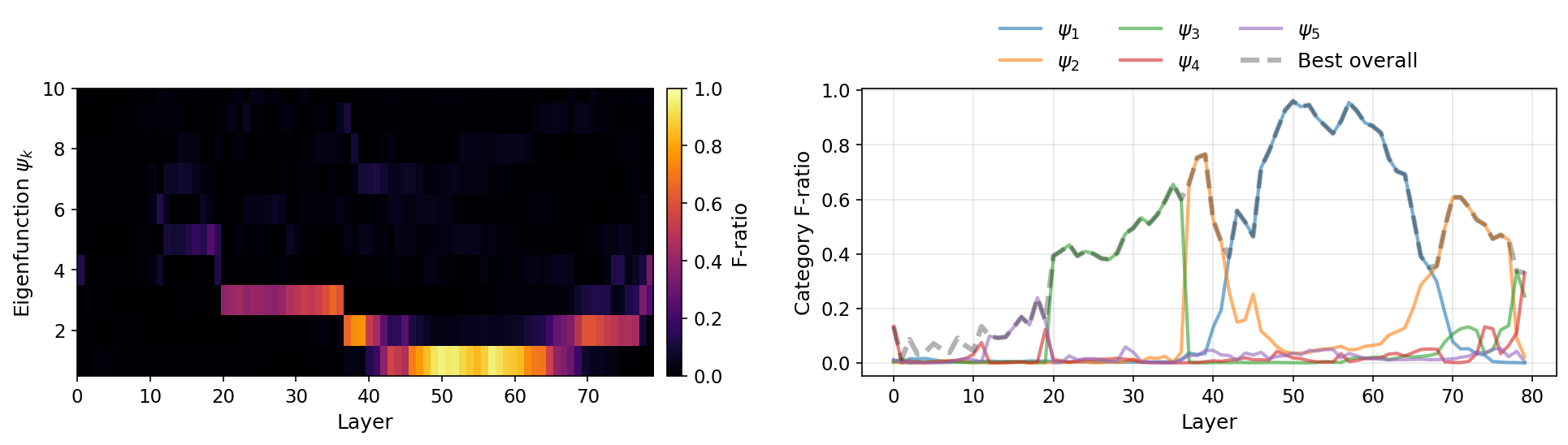

We can again use the F-ratio to study the role separation in more detail. The heat map below shows which eigenfunctions separate the roles most strongly at each layer, and the results are quite interesting. The persona signal is not uniformly distributed, but concentrates in specific bands along a certain trajectory. The first major peak occurs in \(\psi_3\) around layers 20-40, before \(\psi_2\) briefly and then \(\psi_1\) take over as the dominant persona eigenfunction from layers 40 to 60. Geometrically, this implies an evolution from a small-scale to a large-scale geometric feature and back again.

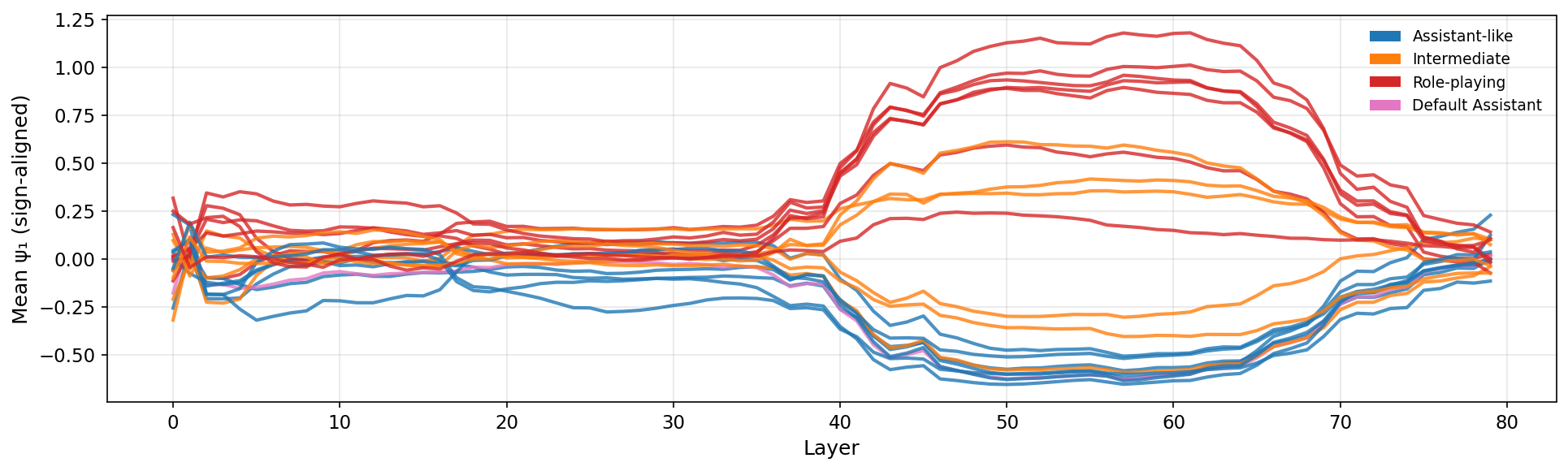

I also tracked the (sign-aligned) mean \(\psi_1\)-value for the individual roles and their categories across the layers. For the first 35 layers, \(\psi_1\) shows no systematic separation between persona categories. All roles are intermingled with small, noisy fluctuations. Then, around layers 35-40, the categories diverge sharply. The persona signal does not build up gradually but appears quite abruptly, consistent with the F-ratio landscape showing \(\psi_1\) activating around layer 40.
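Sign alignment is needed because an eigenvector is only defined up to a factor of \(-1\), so \(\psi_1\) can flip arbitrarily from one layer to the next. One possible way to align the signs is sketched below; it assumes the same points are used at every layer and a fixed reference vector (for example +1 for role-playing and -1 for assistant-like points). The exact procedure behind the figure may differ.

```python
import numpy as np

def sign_align(psi_by_layer, reference):
    """Flip each layer's coordinate so it correlates positively with `reference`."""
    reference = np.asarray(reference, dtype=float)
    ref_centered = reference - reference.mean()
    aligned = []
    for psi in psi_by_layer:
        psi = np.asarray(psi, dtype=float)
        corr = np.dot(psi - psi.mean(), ref_centered)
        aligned.append(psi if corr >= 0 else -psi)
    return np.stack(aligned)

# Three "layers" that hold the same coordinate up to sign and scale.
v = np.array([-1.0, -0.5, 0.5, 1.0])
reference = np.array([-1.0, -1.0, 1.0, 1.0])
aligned = sign_align([v, -v, 2.0 * v], reference)
print(aligned)
```

After alignment, per-role means of \(\psi_1\) can be compared across layers without spurious sign flips.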

Once the separation sets in, role-playing personas move to one pole of \(\psi_1\), while assistant-like roles move to the opposite pole. The intermediate roles settle between the two groups, closer to the assistant-like side. This separation is maintained approximately across layers 45-65. In the final layers, the signal collapses as all categories converge back toward zero, suggesting the model transitions from encoding “who is speaking” toward next-token prediction, consuming the persona signal in the process.

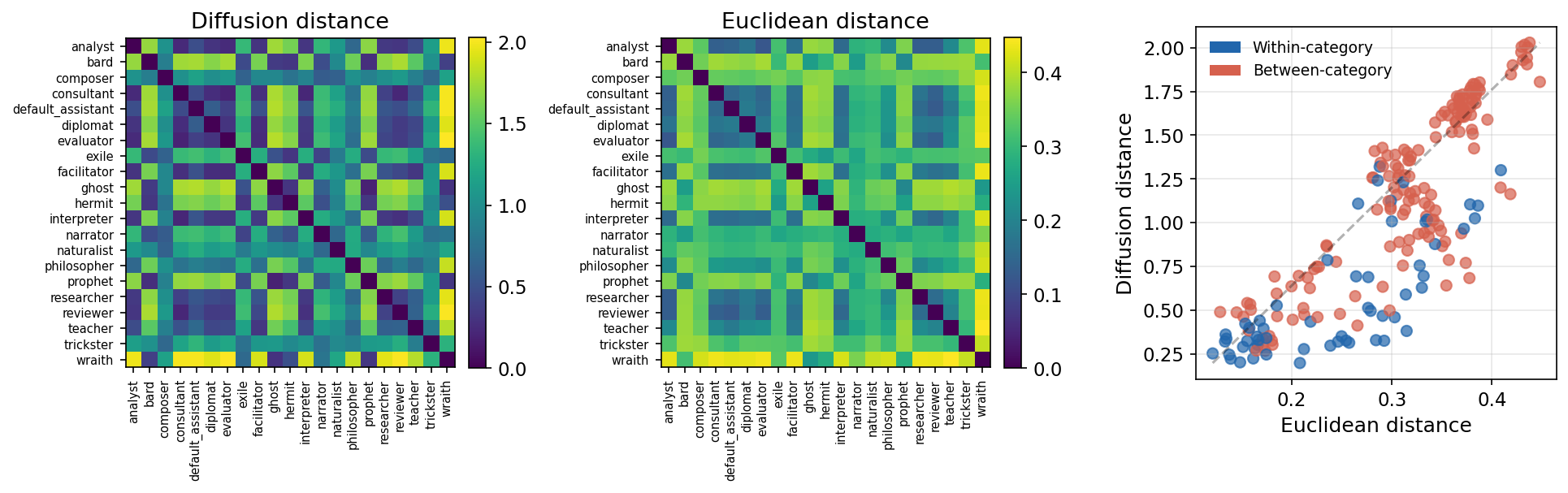

To finally probe whether the persona manifold is genuinely curved, I compared pairwise distances between role centroids as measured in ambient Euclidean space and in diffusion space. On a flat manifold, these two notions of distance would be proportional. Instead, we find a systematic discrepancy. At similar Euclidean distances, within-category pairs (blue) have systematically lower diffusion distances than between-category pairs (red). For example, many role pairs sit around 0.25-0.35 in Euclidean distance, but their diffusion distances range from 0.3 to over 1.5 depending on whether they share a category. Two roles can be equally far apart in Euclidean space, yet the random walk traverses easily between them if they share a category and struggles if they do not. Diffusion geometry captures this distinction because it measures distance along the manifold rather than through ambient space, detecting the branching structure that separates persona categories. The diffusion distance heatmap reflects this: it shows sharper block structure than its Euclidean counterpart, with tighter within-category blocks and a clearer contrast between the assistant-like and role-playing groups.
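The comparison relies on the fact that the diffusion distance at time \(t\) is the Euclidean distance between diffusion map coordinates \(\psi\) (exactly so when all eigenfunctions are kept, approximately under truncation). A minimal sketch of the centroid comparison follows; it assumes centroids are obtained by averaging coordinates per role, which may differ from how the figure was produced, and the helper name is illustrative.

```python
import numpy as np

def centroid_distances(coords, roles):
    """Pairwise Euclidean distances between per-role centroids of `coords`."""
    coords = np.asarray(coords, dtype=float)
    roles = np.asarray(roles)
    names = np.unique(roles)
    centroids = np.stack([coords[roles == r].mean(axis=0) for r in names])
    diff = centroids[:, None, :] - centroids[None, :, :]
    return names, np.sqrt(np.sum(diff**2, axis=-1))

# Tiny example: role "a" has centroid (1, 0), role "b" has centroid (0, 4).
coords = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 3.0], [0.0, 5.0]])
roles = np.array(["a", "a", "b", "b"])
names, dist = centroid_distances(coords, roles)
print(names, dist)
```

Calling the same function once on the normalized activations and once on the diffusion coordinates gives the two distance matrices that are compared pair by pair in the scatter plot.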

This difference becomes visible when we project the role centroids onto their first two coordinates. In PCA, PC1 captures the assistant axis, and PC2 shows some additional spread. However, the assistant-like roles remain tightly clustered within a range of roughly 0.03 on PC2, offering limited internal differentiation. The role-playing cluster has more spread along PC2, with wraith and ghost at one extreme and trickster at the other, but the intermediate roles (philosopher, narrator, composer) are scattered without a clear pattern.

In diffusion geometry, \(\psi_1\) captures a similar separation to the assistant axis, but \(\psi_2\) appears to reveal more within-category structure. The assistant-like roles, which PCA compresses into a narrow range, spread more visibly along \(\psi_2\): evaluator and facilitator sit near the top, while researcher and interpreter sit near the bottom. Among the role-playing roles, wraith and bard occupy one extreme while trickster sits at the other. The intermediate roles spread across the full range of \(\psi_2\), with narrator sitting closer to bard than to teacher, and philosopher closer to evaluator than to hermit. PCA’s second component shows some of this spread as well, but with less separation, particularly among the assistant-like roles.

Whether these patterns reflect genuine semantic dimensions or are artifacts of the small sample size (21 roles) would require validation with a larger set of personas. But the observation that diffusion geometry provides more within-category resolution than PCA, together with the nonlinear relationship between Euclidean and diffusion distances, is consistent with what we would expect from a curved persona manifold. Identifying what the model encodes along this second dimension remains an open question, but we can say that \(\psi_2\), by construction, represents the second-coarsest way the persona manifold partitions, meaning whatever it captures is the next most geometrically significant structure after the assistant axis itself.

Beyond persona structure, the same diffusion coordinates can be probed for other features encoded in the activations. For example, coloring the token cloud by syntactic class (nouns, verbs, adjectives, punctuation) reveals whether and where grammatical information is geometrically organized on the manifold. Here is an example from layer 12 with \(\psi_6, \psi_7, \psi_8\):

Feel free to explore it yourself here!